Asymptotic Notation

Consider the example: Given number \(n\) , write a function to find sum of first \(n\) natural numbers.

Some solutions (Python):

-

def sum1(n): return n * (n + 1) / 2Total work done: \(c_{1}\) and it is not dependent on \(n\) .

-

def sum2(n): sum = 0 for n in range(n + 1): sum += n return sumTotal work done: Some constant work and a loop that executes n times: \(c_{2}n + c_{3}\) .

-

def sum3(n): sum = 0 for i in range(1, n + 1): for j in range(1, i + 1): sum += 1 return sumThe inner loop executes:

- once for

i = 1 - twice for

i = 2 - thrice for

i = 3

So the total work done is:

\( 1 + 2 + 3 + ... + n \\ = n * (n + 1)/2 \\ = c_{4}{n^2} + c_{5}n + c_{6} \) - once for

It’s a measure of the order of growth of an algorithm in terms of its input size.

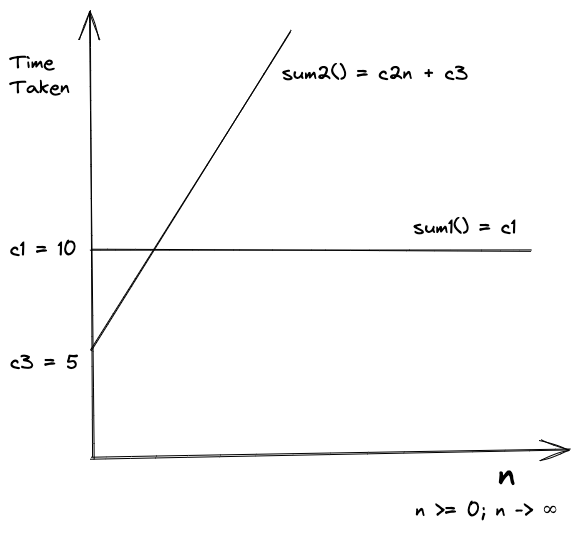

Let’s compare the growth of solutions 1 and 2.

Assuming the values of constants as per the figure below, let’s find \(n\) .

So, for any

\(n >= 3\)

the constant function sum1() will always be faster than sum2().

A function \(f(n)\) is said to be growing faster than \(g(n)\) if

\[\text {for $n \geqslant 0, f(n), g(n) \geqslant 0 $} \\ \lim\limits_{n \to \infty} \frac{g(n)}{f(n)} = 0\]Example:

\(f(n) = n^2 + n + 6 \hspace{20px} g(n) = 2n + 5 \\ \lim\limits_{n \to \infty} \frac{2n + 5} {n^2 + n + 6} \\ \small \text{dividing by highest term i.e. } n^2 \hspace{10px} \lim\limits_{n \to \infty} \frac{2/n + 5/n^2} {1 + 1/n + 6/n^2} \\ \small \text{with n tending to } \infty \hspace{10px} \lim\limits_{n \to \infty} \frac{0 + 0}{1 + 0 + 0} = 0\)So, if \(f(n)\) and \(g(n)\) represent time complexity of algorithms i.e. order of growth, \(g(n)\) is a better algorithm.

- Ignore lower order terms.

- Ignore leading constants.

Faster growing function dominates a slower growing one.

Common dominance relations: \(C < \text {loglog } n < \text {log } n < n^{1/3}< n^{1/2} < n < n^2 < n^3 < 2^n < n^n\)

Let’s Consider some examples:

-

def nsum(arr, n): sum = 0 for i in range(n): sum += arr[i] return sumThis function is linear.

-

def nsum(arr, n): if n % 2 != 0: return 0 sum = 0 for i in range(n): sum += arr[n] return sumBest Case: When n is odd, it’s going to take constant time.

Average Case: Often it’s impractical to calculate unless you know all the inputs that will be provided to the algorithm all the time.

Worst Case: When n is even it will be linear.

Worst Case is considered the most important case for algorithm analysis.

Cases are not related to notations. You can use the any notation for any case.

Big O: Represents exact or upper bound i.e. order of growth.

Big Theta (𝛳): Represents exact bound.

Big Omega (Ω): Represents exact or lower bound.

Big O is the most common notation used.

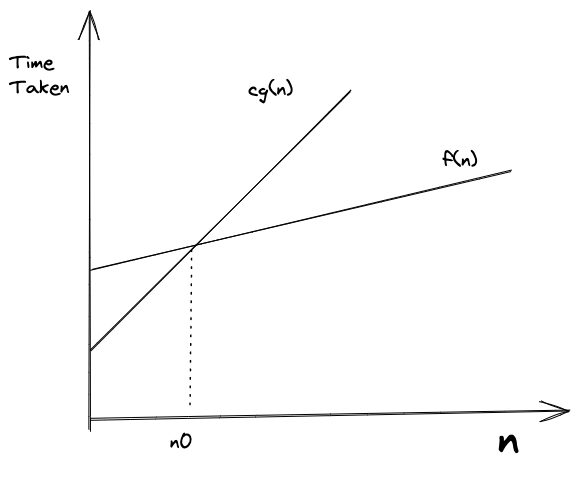

\(f(n) = O(g(n))\) if and only if there are exact constants \(c\) and \(n_0\) such that \(f(n) \leqslant cg(n)\) for all \(n \geqslant n_0\) .

In simple terms, we want to find a function \(g(n)\) that is always going to be equal to or greater than \(f(n)\) when multiplied by a constant for large values of \(n\) .

Example:

\(f(n) = 2n + 3\) can be written as \(O(n)\) after ignoring co-efficient of highest-growing term and lower-order terms.

Since \(f(n) \leqslant O(g(n)\) , equating it to above gives us \(g(n) = n\) .

Let’s prove it mathematically:

\(f(n) \leqslant cg(n) \space \forall \space n \geqslant n_0 \\ \text{i.e.}\space(2n + 3) \leqslant cg(n) \\ \text{i.e.}\space(2n + 3) \leqslant cn \space \because g(n) = n\)Quick way to find the value of c is take leading constant of highest growing term and add 1.

\(\therefore c = 3 \\ \small \text{Substituting} \space c \space \text{to find the value of } n_0 \\ 2n + 3 \leqslant 3n \\ 3 \leqslant n \therefore n_0 = 3 \\\)So for all values of \(n \space \geqslant 3\) , the equation \(2n+3 \leqslant 3n\) holds true.

If we try putting some values, say \(n = 4\) in above equation, we can observe it holds true. Hence proved.

Some more examples:

- \(\{\frac{n}{4}, 2n + 3, \frac{n}{100} + \log n, 100, \log n, ...\} \in O(n)\)

- \(\{n^2 + n, 2n^2, \frac{n^2}{100}, ...\} \in O(n^2)\)

Since Big O is upper bound, all functions in 1 can be said to belong to 2, but it helps to use tight bounds.

Big O gives the upper bound. If we say an algorithm is linear, then the algorithm in question is

\( \leqslant O(n)\) . So, it's going to perform linearly in worst case scenario or better. Therefore Big O is the upper bound of the algorithm.

\(f(n) = \Omega(g(n))\) iff there are exact constants \(c\) and \(n_0\) such that \(0 \leqslant cg(n) \leqslant f(n)\) for all \(n \geqslant n_0\) .

- Big Omega is exact opposite of Big O.

- Big Omega gives the lower bound of an algorithm i.e. the algorithm will perform at least or better than it.

- Example - \(f(n) = 2n + 3 = \Omega(n)\)

- \(\{\frac{n}{4}, \frac{n}{2}, 2n, 2n+3, n^2 ...\} \in \Omega(n)\) i.e. all the functions having order of growth greater than or equal to linear.

- If \(f(n) = \Omega(g(n)) \space \small{then} \space g(n) = O(f(n))\) .

\(f(n) = \Theta(g(n))\) iff there are exact constants \(c_1, c_2, n_0\) such that \(0 \leqslant c_1g(n) \leqslant f(n) \leqslant c_2g(n) \space \small{for all} \space n \geqslant n_0\) .

- Big Theta gives the exact bound on the order of growth of a function.

- Example - For \(f(n) = 2n + 3 = \Theta(n)\)

- If

\(f(n) = \Theta(g(n))\)

then

\(f(n) = O(g(n)) \text { and } f(n) = \Omega(g(n)) \\ g(n) = O(f(n)) \text { and } g(n) = \Omega(f(n))\) - \(\{\frac{n^2}{4}, \frac{n^2}{2}, n^2, 4n^2, ...\} \in \Theta(n^2)\)

- We should use big 𝛳 notation whenever possible.